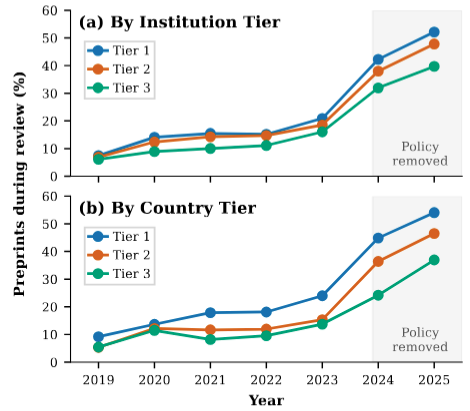

We examine how preprints and author recognition affect outcomes across institutional hierarchies after ACL removed its anonymity period. By tracking preprinting trends for publications, surveying NLP researchers, interviewing community members, and analyzing peer reviews, we find that elite institutions post preprints more frequently and that reviewer knowledge of authors inflates scores at elite institutions but not elsewhere, while also lowering review quality.

Pranav A

I am Pranav, a PhD researcher at the University of Hamburg, where I work in Anne Lauscher's Trustworthy AI Group. I am a researcher, educator, and activist working at the intersection of AI ethics and NLP.

My full name is Pranav Agrawal, but I prefer to be called Pranav A. For academic publications I use the name A Pranav. I use he/him or they/them pronouns. You can reach me at cs.pranav.a (at) gmail.com.

Research Interests

My main research interest is inclusive AI policymaking, which sits at the intersection of AI ethics, NLP, ML, and HCI. My current work focuses on resisting surveillance AI through two complementary interventions: technical research that documents how these systems fail to do what they claim, and societal work that examines how AI washing lends surveillance an air of inevitability while building engagement with the communities most exposed to it. Alongside this, I continue earlier threads on multilinguality in computational linguistics and NLP.

Teaching

At the University of Hamburg, I teach Ethics and Modern AI, Text Analysis, and Trustworthy AI. I supervise bachelor's and master's theses and run student projects.

Publications

Below are some of the research papers I have worked on. My work has been published at top-tier conferences and received a best paper award.

A mixed-methods study combining surveys, interviews, and large-scale citation analysis of papers from eight major computer science venues from 2019 to 2025. We find that deadnaming of transgender researchers in citations decreased by 92%, and that venues with accessible and visible name change policies have significantly fewer citation errors than venues with inaccessible policies.

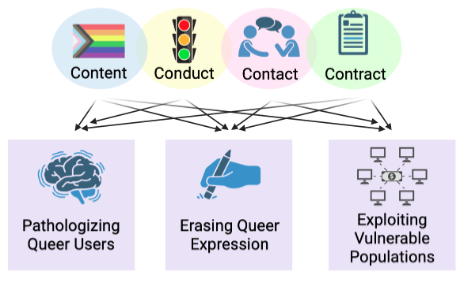

We present a participatory auditing workshop at EurIPS 2025 where 16 queer community members audited four case studies using the 4Cs harm taxonomy (Content, Conduct, Contact, Contract) applied across the AI lifecycle.

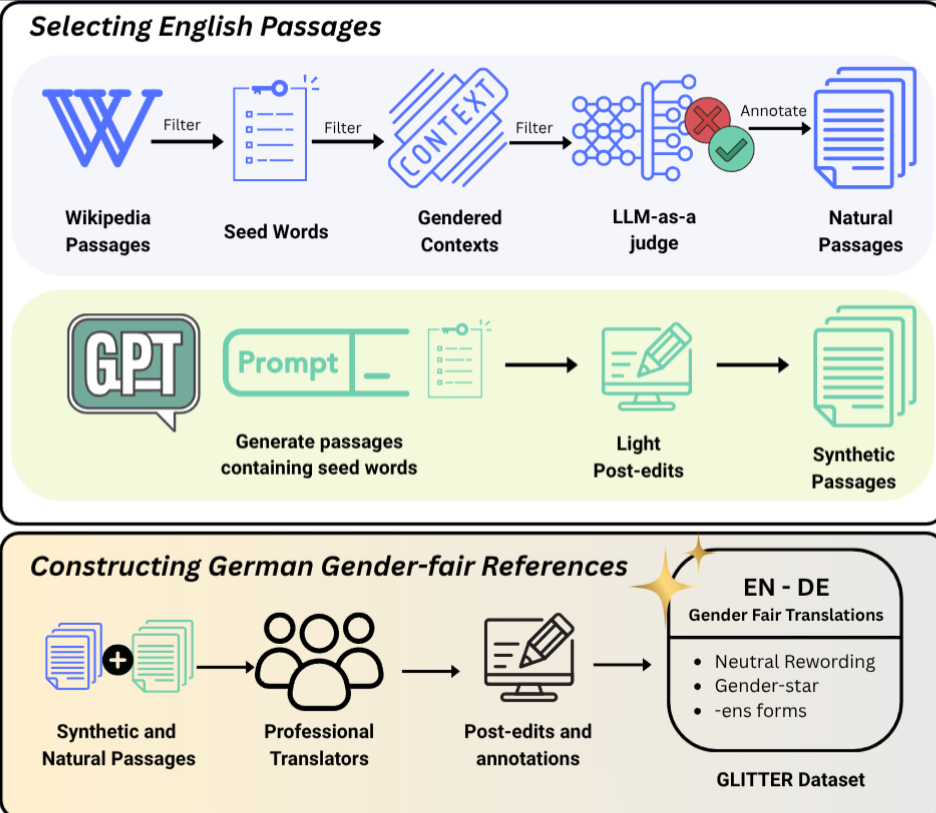

We introduce Glitter, an English-German benchmark featuring extended passages with professional translations implementing three gender-fair alternatives. Our experiments reveal significant limitations in state-of-the-art language models, which default to masculine generics and rarely produce gender-fair translations even when explicitly instructed.

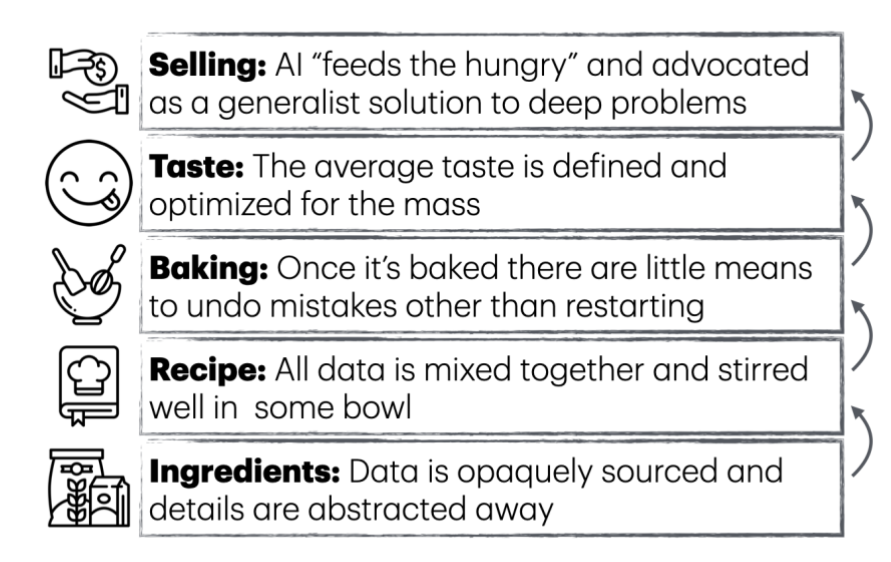

We expand Yann LeCun's 'cake that is intelligence' analogy to the full life-cycle of AI systems, from sourcing data to evaluation and distribution. We describe each step's social ramifications and provide actionable recommendations for increased participation in AI discourse.

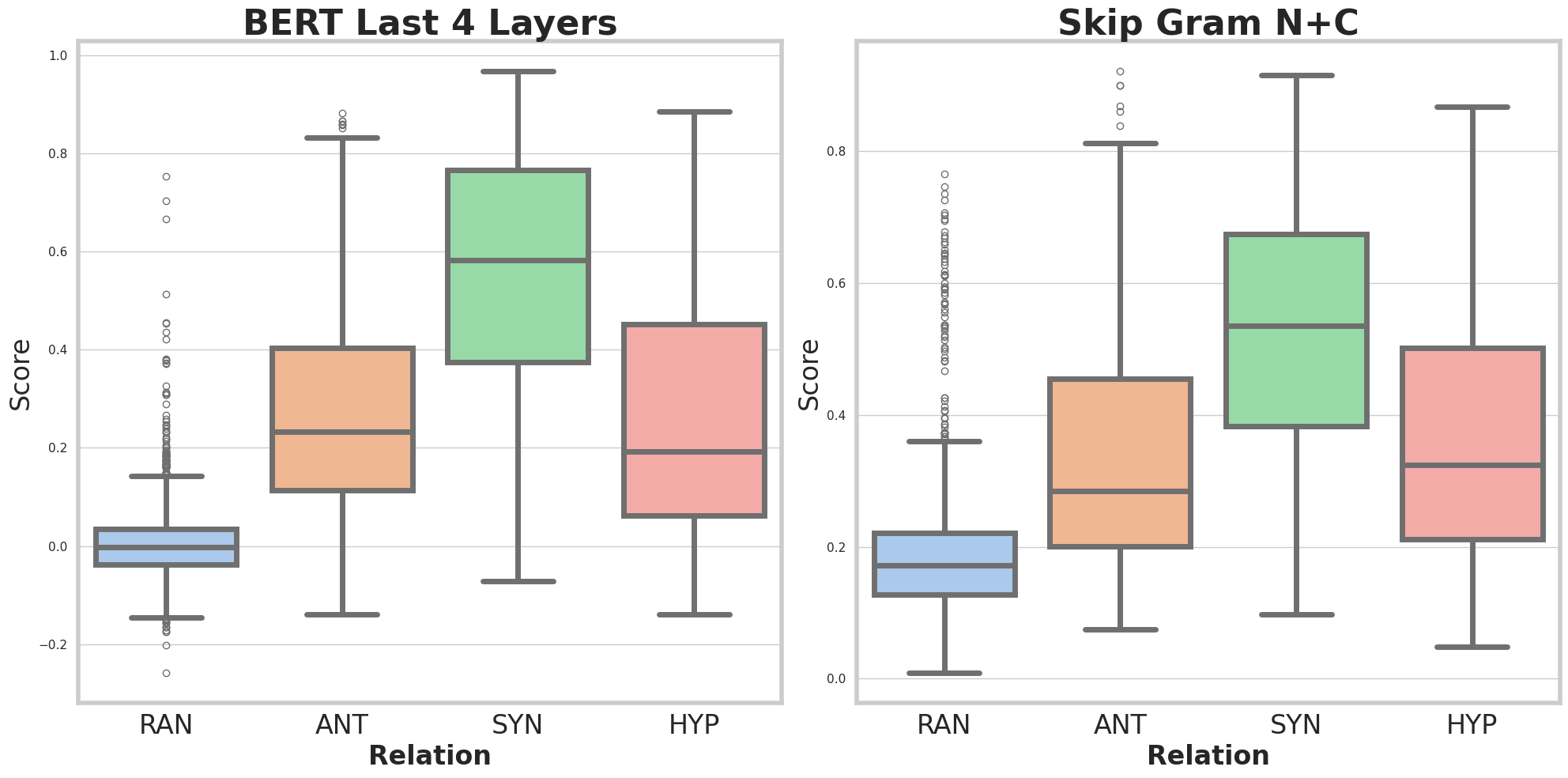

Empirical comparisons of character-based models against word-based models on common Chinese semantic benchmarks.

A community-led participatory design case study of Queer in AI, contributing lessons on decentralization, building community aid, empowering marginalized groups, and critiquing poor participatory practices.

Queer in AI provides a tutorial for diversity & inclusion organizers on making virtual conferences more queer-friendly, drawing on their community's experiences with marginalization.

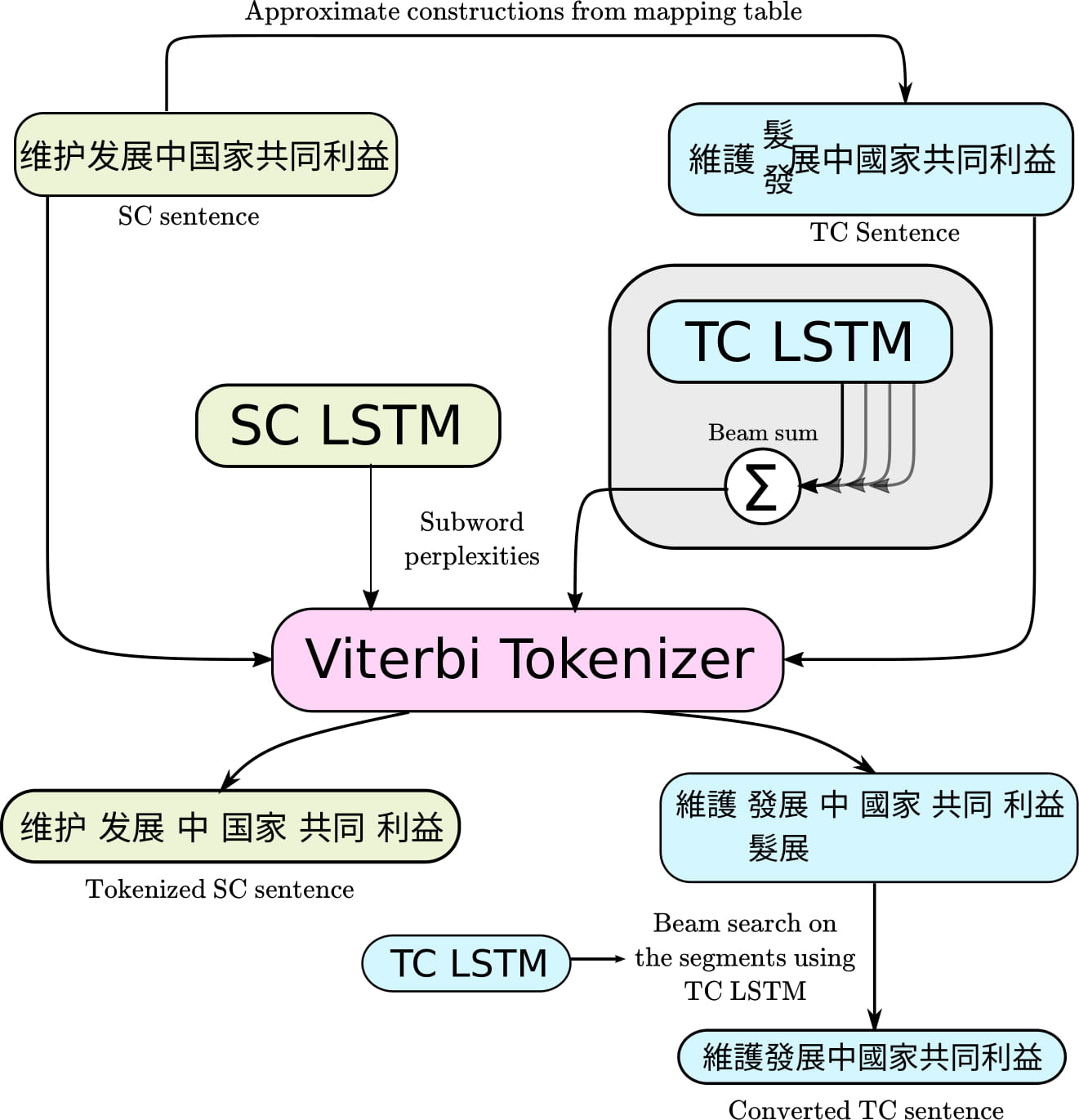

The paper contributes a contextual subword segmentation method, along with benchmark datasets, that outperforms previous Chinese character conversion approaches by 6 points in accuracy, especially for code-mixing and named entities.

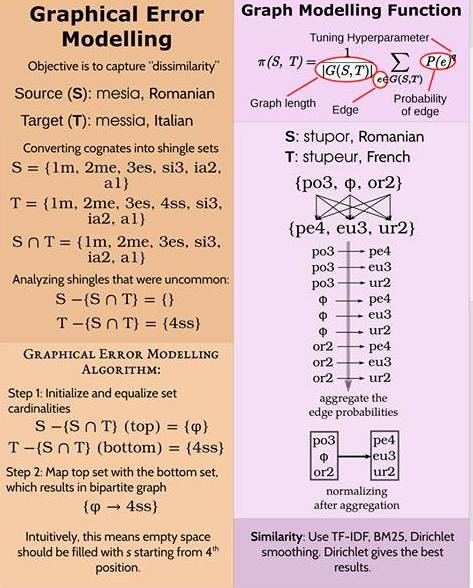

The paper contributes information retrieval ranking functions with heuristics like positional tokenization and graphical error modelling to the problem of cognate detection.

Volunteer Service and D&I Advocacy

I am a co-founder of Queer in NLP, co-organize the Identity-Aware AI workshop series, and have served as D&I chair at *CL conferences.

Miscellaneous

Outside of research, I do improv comedy and lead an improv group at UHH called Tupananchiskamas. If you would like to join us, shoot us an email.

I also teach meditation, in particular Insight Dialogue, a practice that brings mindfulness into conversation. If you are interested in learning, let me know.